How machine-learning recommendations influence clinician treatment selections: the example of antidepressant selection

This post summarizes our research paper published in Translational Psychiatry.

Machine learning and AI may transform healthcare, but little is known about how clinicians’ treatment decisions will be influenced by machine learning recommendations and explanations. Using a factorial experiment with 220 clinicians, we studied:

- How do correct and incorrect machine learning (ML) recommendations influence clinicians’ antidepressant selection?

- How do different types of supporting explanations for the recommendation influence treatment selection?

Our results challenge the assumption that clinicians interacting with machine learning tools will perform better than either the clinicians or the ML algorithms acting alone.

Why focus on antidepressant treatment selection?

The possibility of improving treatment outcomes in major depressive disorder using ML has received a great deal of attention in recent years. Currently, clinicians and patients often rely on a process of trial and error to find an effective treatment. Finding one is challenging for a number of reasons, including heterogeneous symptoms and drug tolerability concerns. An estimated one-third of patients fail to reach remission even after four antidepressant trials.

Here’s what we found:

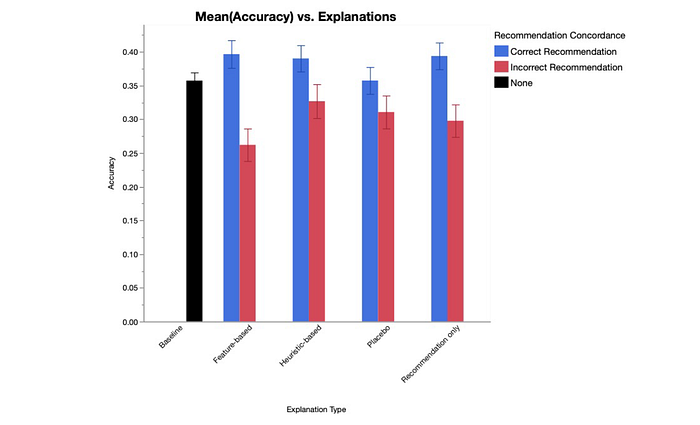

- ML recommendations did not improve treatment decisions. Interacting with ML recommendations did not improve treatment selection accuracy, where accuracy was assessed by psychopharmacology experts. We found no difference between clinician performance when acting independently compared with the clinician–ML collaborative performance.

- Incorrect recommendations can lead to worse decision-making. Interacting with incorrect recommendations correlated with significantly lower treatment selection accuracy scores compared to both correct recommendations and questions with no ML recommendation.

- With correct recommendations, explanations didn’t change decision-making. Pairing explanations with correct ML recommendations had no significant effect on treatment decisions.

- With incorrect recommendations, the design of the explanation matters. When paired with incorrect recommendations, interacting with feature-based explanations correlated with lower accuracy scores compared to the baseline condition. Feature-based explanations included less information than heuristic-based explanations, which may help to explain the increased use of these explanations and the reduced accuracy scores.

What does this all mean?

While clinicians’ acceptance of the technology and the performance of the algorithms are both important, our results suggest that these factors alone are not enough to predict positive performance outcomes. Evaluation techniques using realistic tasks with the target user are necessary for determining how ML recommendations influence clinical decisions. Below we list some of the key takeaways from this study:

- Our results demonstrate that the implementation of ML tools with high accuracy rates may be insufficient to improve treatment selection accuracy, while also demonstrating the risk of overreliance when clinicians are shown incorrect treatment recommendations.

- Our results show the importance of human factors research and methods in designing ML for clinical decision-making. Design decisions, such as the type of explanation to display, can have significant effects on clinicians’ behavior.

- Our work aligns with recent studies in non-medical domains suggesting that humans interacting with ML tools may perform worse than the algorithm acting independently.

Where do we go from here?

This study helps to demonstrate the potential risks of ML applications and how ML errors may negatively influence clinical decisions.

Future work needs to continue to consider the trade-off between effectiveness and usability in order to optimize for clinician–ML collaboration. As a first step, we ran a series of co-design sessions with primary care providers. The goal of this work was to better understand how intelligent decision support tools may fit into their daily workflow and what ML information they find most useful. You can read more about this work here.

Want to know more?

For more details about this study, you can find the full research paper here.